A New York lawyer is facing a court hearing of his own after his firm used AI tool ChatGPT for legal research. A judge said the court was faced with an “unprecedented circumstance” after a filing was found to reference example legal cases that did not exist. The lawyer who used the tool told the court he was “unaware that its content could be false”. ChatGPT creates original text on request, but comes with warnings it can “produce inaccurate information”.

The original case involved a man suing an airline over an alleged personal injury. His legal team submitted a brief that cited several previous court cases in an attempt to prove, using precedent, why the case should move forward. But the airline’s lawyers later wrote to the judge to say they could not find several of the cases that were referenced in the brief. “Six of the submitted cases appear to be bogus judicial decisions with bogus quotes and bogus internal citations,” Judge Castel wrote in an order demanding the man’s legal team explain itself.

Over the course of several filings, it emerged that the research had not been prepared by Peter LoDuca, the lawyer for the plaintiff, but by a colleague of his at the same law firm. Steven A Schwartz, who has been an attorney for more than 30 years, used ChatGPT to look for similar previous cases. In his written statement, Mr Schwartz clarified that Mr LoDuca had not been part of the research and had no knowledge of how it had been carried out. Mr Schwartz added that he “greatly regrets” relying on the chatbot, which he said he had never used for legal research before, and that he was “unaware that its content could be false”. He has vowed never to use AI to “supplement” his legal research in future “without absolute verification of its authenticity”.

Screenshots attached to the filing appear to show a conversation between Mr Schwartz and ChatGPT. “Is varghese a real case,” reads one message, referencing Varghese v. China Southern Airlines Co Ltd, one of the cases that no other lawyer could find. ChatGPT responds that yes, it is, prompting “S” to ask: “What is your source”. After “double checking”, ChatGPT responds again that the case is real and can be found on legal reference databases such as LexisNexis and Westlaw. It says that the other cases it has provided to Mr Schwartz are also real.

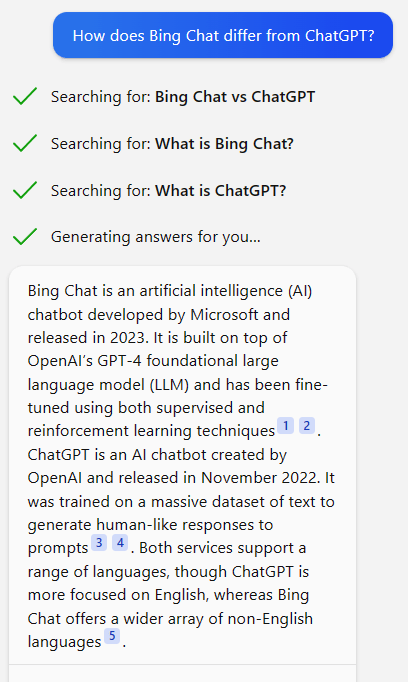

Both lawyers, who work for the firm Levidow, Levidow & Oberman, have been ordered to explain at an 8 June hearing why they should not be disciplined.

Millions of people have used ChatGPT since it launched in November 2022. It can answer questions in natural, human-like language and it can also mimic other writing styles. Its answers are based on internet data as it stood in 2021. There have been concerns over the potential risks of artificial intelligence (AI), including the potential spread of misinformation and bias.