The following is excerpted from “Some Lawyers Are People Too!” by Hugh L. Dewey, Esq. (2009).

Legal anthropologists have not yet discovered the proverbial first lawyer. No briefs or pleadings remain from the proto-lawyer that is thought to have been in existence more than 5 million years ago.

Chimpanzees, man’s and lawyer’s closest relative, share 99% of the same genes. New research has definitively proven that chimpanzees do not have the special L1a gene that distinguishes lawyers from everyone else. (See Johnson, Dr. Mark. “Lawyers in the Mist?” Science Digest, May 1990: pp. 43-52.) This disproved the famous outcome of the Scopes Monkey Trial in which Clarence Darrow proved that monkeys were also lawyers.

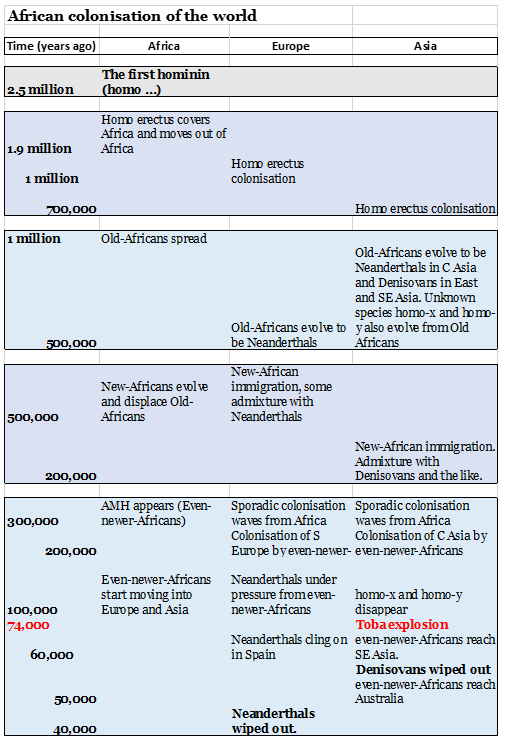

Charles Darwin, Esquire, theorized in the mid-1800s that tribes of lawyers existed as early as 2.5 million years ago. However, in his travels, he found little evidence to support this theory.

Legal anthropology suffered a setback at the turn of the century in the famous Piltdown Lawyer scandal. In order to prove the existence of the missing legal link, a scientist claimed he had found the skull of an ancient lawyer. The skull later turned out to be homemade, combining the large jaw of a modern lawyer with the skull cap of a gorilla. When the hoax was discovered, the science of legal anthropology was set back 50 years.

The first hard scientific proof of the existence of lawyers was discovered by Dr. Margaret Leakey at the Olduvai Gorge in Tanzania. Her find consisted of several legal fragments, but no full case was found intact at the site. Carbon dating has estimated the find at between 1 million and 1.5 million years ago. However, through legal anthropology methods, it has been theorized that the site contains the remains of a fraud trial in which the defendant sought to disprove liability on the basis of his inability to stand erect. The case outcome is unknown, but it coincides with the decline of the Australopithecus and the rise of Homo erectus in the world. (See Leakey, Margaret A. “The case of erectus hominid.” Legal Anthropology, March 1947: p. 153.)

In many sites dating from 250,000 to 1,000,000 years ago, legal tools have been uncovered. Unfortunately, the tools are often in fragments, making it difficult to gain much knowledge.

The first complete site discovered has been dated to 150,000 years ago. Stone pictograph briefs were found concerning a land boundary dispute between a tribe of Neanderthals and a tribe of Cro-Magnons. This decision in favor of the Cro-Magnon tribe led to a successive set of cases, spelling the end for the Neanderthal tribe. (See Widget, Dr. John B. “Did Cro-Magnon have better lawyers?” Natural History, June 1926: p. 135. See also Cook, Benjamin. Very Very Early Land Use Cases. Legal Press, 1953.)

Until 10,000 years ago, lawyers wandered around in small tribes, seeking out clients. Finally, small settlements of lawyers began to spring up in the Ur Valley, the birthplace of modern civilization. With settlement came the invention of writing. Previously, lawyers had relied on oral bills for collection of payment, which made collection difficult and meant that if a client died before payment (with life expectancy between 25 and 30 and the death penalty for all cases, most clients died shortly after their case was resolved), the bill would remain uncollected. With written bills, lawyers could continue collection indefinitely.

In the late 1880s, legal anthropologists cracked the legal hieroglyphic language when they were able to determine the meaning of the now famous Rosetta Stone Contract. (See Harrison, Franklin D. The Rosetta Bill. Doubleday, 1989.) The famous first paragraph can be recited verbatim by almost every lawyer:

“In consideration of 20,000 Assyrian workers, 3,512 live goats, and 400,000 hectares of dates, the undersigned hereby conveys all of the undersigned’s right, title, and interest in and to the property commonly known as the Sphinx, more particularly described on Stone A attached hereto and made a part hereof.”

The attempted sale of the Sphinx resulted in the Pharaoh issuing a country-wide purge of all lawyers. Many were slaughtered, and the rest wandered in the desert for years looking for a place to practice.

Greece and Rome saw the revival of the lawyer in society. Lawyers were again allowed to freely practice, and they took full advantage of this opportunity. Many records exist from this classic period. Legal cases ranged from run-of-the-mill goat contract cases to the well-known product liability case documented in the Estate of Socrates vs. Hemlock Wine Company. (See Wilson, Phillips ed. Famous Roman Cases. Houghton, Mifflin publishers, 1949.)

The most famous lawyer of this period was Hammurabi the Lawyer. His code of law gave lawyers hundreds of new business opportunities. By creating a massive legal system, it increased the demand for lawyers ten-fold. In those days, almost any thief or crook could kill a sheep, hang up a sheepskin, and practice law, unlike the highly regulated system today which limits law degrees to only those thieves and crooks who haven’t been convicted of a major felony.

The explosion in the number of lawyers coincided with the development of algebra, the mathematics of legal billing. Pythagoras, a famous Greek lawyer, is revered for his Pythagorean Theorem, which proved the mathematical quandary of double billing. This new development allowed lawyers to become wealthy members of their community, as well as to enter politics, an area previously off-limits to lawyers. Despite the mathematical soundness of double billing, some lawyers went to extremes. Julius Caesar, a Roman lawyer and politician, was murdered by several clients for his record hours billed in late February and early March of 44 B.C. (His murder was the subject of a play by lawyer William Shakespeare. When Caesar discovered that one of his murderers was his law partner Brutus, he murmured the immortal lines, “Et tu Brute,” which can be loosely translated from Latin as “my estate keeps twice the billings.”)

Before the Roman Era, lawyers did not have specific areas of practice. During that period, legal specialists arose to meet the demands of the burgeoning Roman population. Sports lawyers counseled gladiators, admiralty lawyers drafted contracts for the great battles in the Coliseum, and international lawyers traveled with the great Roman armies to force native lawyers to sign treaties of adhesion, many of which lasted hundreds of years until they were broken by the barbarian lawyers who descended on Rome from the North and East. The ever-popular Pro Bono lawyers (Latin for “can’t get a real job”) represented Christians and lost all their cases for 300 years.

As time went on, the population of lawyers continued to grow until 1 out of every 2 Romans was a lawyer. Soon lawyers were intermarrying. This produced children who were legally entitled to practice Roman law, but with the many defects that such a match produced, the quality of lawyers degenerated, resulting in an ever-increasing defective legal society and the introduction of accountants. Pressured by the legal barbarians from the North with their sign-or-die negotiating skills, Rome fell, and the world entered the Dark Ages.

During the Dark Ages, many of the legal theories and practices developed during the golden age were forgotten. Lawyers lost the art of double billing, the thirty-hour day, the 15-minute phone call, and the conference stone. Instead, lawyers became virtually manual laborers, sharing space with primitive doctor-barbers. Many people sought out magicians and witches instead of lawyers since they were cheaper and easier to understand.

The Dark Ages for lawyers ended in England in 1078. Norman lawyers discovered a loophole in Welsh law that allowed William the Conqueror to foreclose an old French loan and take most of England, Scotland, and Wales. William rewarded the lawyers for their work, and soon lawyers were again accepted in society.

Lawyers became so popular during this period that they were able to heavily influence the kings of Britain, France, and Germany. After a Turkish corporation stiffed the largest and oldest English law firm, the partners of the firm convinced these kings to start a Bill Crusade, sending collection knights all the way to Jerusalem to seek payment.

A major breakthrough for lawyers occurred in the 17th century. Blackstone the Magician, on a trip through Rome, unearthed several dozen ancient Roman legal texts. This new knowledge spread through the legal community like the black plague. Up until that point, lawyers used the local language of the community for their work. Since many smart non-lawyers could then determine what work, if any, the lawyer had done, lawyers often lost clients, and sometimes their heads.

Using Blackstone’s finds, lawyers could use Latin to hide what they did so that only other lawyers understood what was happening in any lawsuit. Blackstone was a hero to all lawyers until, of course, he was sued for copyright infringement by another lawyer. Despite his loss, Blackstone is still fondly remembered by most lawyers as the father of legal Latin. “Res ipsa loquitur” was Blackstone’s favorite saying (“my bill speaks for itself”), and it is still heard today.

Many lawyers made history during the Middle Ages. Genghis Kahn, Esq., from a family of Jewish lawyers, Hun & Kahn, pioneered the practice of merging with law offices around Asia Minor at any cost. At one time, the firm was the largest in Asia and Europe. Their success was their downfall. Originally a large personal injury firm (if you didn’t pay their bill, they personally injured you), they became conservative over time and were eventually overwhelmed by lawyers from the West. Vlad Dracul, Esq., a medical malpractice specialist, was renowned for his knowledge of anatomy, and few jurors would side against him for fear of his special bill (his bill was placed atop 20-foot wooden spears on which the non-paying client was placed).

Leonardo di ser Piero da Vinci, Esq., was multi-talented. Besides having a busy law practice, he was an artist and inventor. His most famous case was in defense of himself. M. Lisa vs. da Vinci (Italian Superior Court 1513) involved a product liability suit over a painting da Vinci delivered to the plaintiff. The court, in ruling that the painting was not defective despite the missing eyebrows, issued the famous line, “This court may not know art, but it knows what it likes, and it likes the painting.” This was not surprising since the plaintiff was known for her huge, caterpillar-like eyebrows. Da Vinci was able to convince the court that he was entitled not only to damages but to attorneys’ fees, costs, and punitive damages as well. The court, taking one last look at the plaintiff, granted the request.

A land dispute case in the late 15th century is still studied today for the clever work of Christopher Columbus, Esq. He successfully convinced an Aztec court, in Columbus vs. 1,000,000 Acres, that since the Indians did not believe in possession, they could not claim the land in question. Therefore, his claim had to be given priority. Despite the fact that the entire court was sacrificed to the gods, the case held and Spain took an early legal lead in the New World.

As the New World was colonized, England eventually surpassed Spain as the leading colonizer. England began sending all of its criminals and thieves to the New World. This mass dumping of lawyers to the states would come back to haunt England. Eventually, the grandchildren of these pioneer lawyers would successfully defeat King George III in the now famous King George III v. 100 Bags of Tea. England by this time was dreadfully short of lawyers. The new American lawyers exploited this shortfall and, after a seven-year legal war, defeated the British and created the United States, under the famous motto, “All lawyers are created equal.”

England never forgot this lesson and immediately stopped its practice of sending lawyers to the colonies. This policy left Australia woefully deficient in lawyers.

With stories of legal success common in the late 1700s, more and more people attempted to become lawyers. This process of stealing a shingle worried the more successful lawyers. To stem this tide as well as to create a new profit center, these lawyers passed laws requiring all future lawyers to be restricted from practice unless they went to an approved law school. The model school from which all legal education rules developed was Harvard Law School.

Harvard, established in 1812, set the standard for legal education when, in 1816, it created the standardized system for legal education. This system was based on the Socratic method. At most universities, the students questioned the teacher/professor to gain knowledge. These students would bill their professors, and if the bill went unfulfilled, the students usually hung up their law professor for failure of payment. At Harvard, the tables were turned, with the professors billing the students. This method enriched the professors and remains the standard in use in most law schools in America and England.

As developed by Harvard, law students took a standard set of courses as follows:

- Jurisprudence: The history of legal billing, from early Greek and Roman billing methods to modern collection techniques.

- Torts: French law term for “you get injury, we keep 40%.” Teaches students ambulance-chasing techniques.

- Contracts: Teaches that despite an agreement between two parties (the contract), a lawsuit can still be brought.

- Civil Procedure: Teaches the tricky arcane rules of court, which were modernized only 150 years ago in New York.

- Criminal Law: Speaks for itself.

These courses continue to be used in most law schools throughout the United States.

Despite the restrictions imposed on the practice of law (a four-year college degree, three years of graduate school, and a state-sponsored examination), the quantity of lawyers continues to increase to the point that three out of every five Americans are lawyers. (In fact, there are over 750,000 lawyers in this country.) Every facet of life today is controlled by lawyers. Even Dan Quayle (a lawyer) claims, surprise, that there are too many lawyers. Yet until limits are imposed on legal birth control, the number of lawyers will continue to increase. Is there any hope? We don’t know and frankly don’t care since the author of this book is a successful, wealthy lawyer, the publishers of this book are lawyers, the cashier at the bookstore is a law student, and your mailman is a lawyer. So instead of complaining, join us and remember, there is no such thing as a one-lawyer town.

Lawyers are members of a parasitic life-form which emerges in the cracks of human society.